SystemsSec 2018W Lecture 1

Notes

Class 1, January 8

About the course:

Attendance is strongly recommended as lectures will not be posted online (only the wiki notes will be posted online).

In order to succeed, you need to come to class. Things will be discussed, and you need to be present.

Grading Criteria

- 20% Midterm

- 30% Final

- 10% Participation

- 20% Experiences

- 20% Assignments (4)

Midterm & Final

Short answer questions, possibly a few long-answer questions.

Participation

Participation means being present in the classroom, taking notes for the class, raising your hand, and discussing things. There is also a Slack instance that you can participate in.

If for some reason participation will be a problem for you, email the professor now to work it out.

Experiences

There are two portions to the experiences section: readings and tools.

Readings – Make a diligent effort to understand the reading before coming to class. Your response should not be a summary. What was your interaction with the reading? What are your thoughts about the material covered? Did you have any difficulties following along?

Tools – Computer Systems Security is fundamentally an applied field. It is tied to tools, so applied learning is important. Some exercises will be provided, but other things you will come across yourself (e.g., try to set up a firewall, or play around with iptables; you don’t have to succeed). Write a tool response. Plan on sitting down a couple of times and doing some hacking. It is important to get your hands dirty. To start, pick something that you can handle, and maybe ramp it up as the term goes along.

Assignments

The assignments will be in the style of the midterm and final, and will let you know how prepared you are for the exams. There will be two assignments before the midterm and two more after the midterm. They will be submitted through CULearn.

The material covered today:

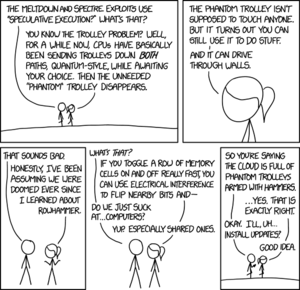

In the news recently: Meltdown and Spectre security flaws

Meltdown is the version that mainly affects Intel processors; Spectre is the more general version. Basically every modern high-performance CPU is affected. The flaws stem from a problem in processor design: the strategy used to increase performance in modern processors allows information to leak.

Inherently, software programs and processes don't trust each other (and they shouldn't), but this flaw means that the barriers between them aren't fixed and that you can potentially read across them.

This is a timing attack. The basis of these attacks is that the time a computation takes depends on the data being computed. By measuring how long something takes to compute, you can figure out what is being computed. There was previously a well-known timing attack on public key encryption, which was solved by responding to all requests in the same constant time.
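The data dependence behind a timing attack can be sketched in Python. This is an illustrative example, not something shown in lecture: the function names and the secret value are made up, and the number of comparison steps stands in for elapsed time so the result is deterministic. The naive comparison stops at the first mismatch, so its cost reveals how many leading characters of a guess are correct. `hmac.compare_digest` from the standard library is designed to avoid that leak.

```python
import hmac

def leaky_equals(secret, guess):
    """Naive comparison that stops at the first mismatch.

    Returns (result, steps); `steps` is a stand-in for elapsed time.
    """
    steps = 0
    if len(secret) != len(guess):
        return False, steps
    for a, b in zip(secret, guess):
        steps += 1
        if a != b:
            return False, steps
    return True, steps

def constant_time_equals(secret, guess):
    """Comparison whose running time does not depend on where the mismatch is."""
    return hmac.compare_digest(secret.encode(), guess.encode())

SECRET = "hunter2"  # hypothetical secret, for illustration only

if __name__ == "__main__":
    print(leaky_equals(SECRET, "xxxxxxx"))  # (False, 1): wrong at the first character
    print(leaky_equals(SECRET, "hunxxxx"))  # (False, 4): first three characters were right
    print(constant_time_equals(SECRET, "hunxxxx"))  # False
```

An attacker who can observe the step count (in practice, the response time) can recover the secret one character at a time instead of guessing the whole string at once.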

Meltdown and Spectre exploit speculative execution. For example, with branch prediction the processor guesses which branch of the code will run next and “runs ahead”; if it predicts correctly, there is a performance advantage. However, flaws were found that enabled kernel memory to be read, or a virtual machine to read data from another virtual machine running on the same processor. This particularly affects cloud computing.

These types of flaws come about because no one was thinking about the design from a security point of view.

System Security is difficult. Attackers find flaws, defenders try to fix them. This happens in real systems, with enormous complexity. Theoretically we can design perfectly secure systems, but attackers will keep finding flaws. This game, as it is today, is weighted towards attackers. Re-balancing the game would require radical ideas.

A (noncomprehensive) list of some security tools and methods:

The purpose of this list is to show what a vast area computer security is, not to list everything that will be covered.

- Firewalls

- Antivirus/Antimalware

- Network monitoring/NIDS

- Reverse engineering

- Cryptography (encryption/digital signing) (for system security, encryption is a tool of last resort)

- Air gaps

- Social Engineering

- (D)DoS

- White list

- Black list

- One way info-gate

- Virtual machines

- Encapsulation

- Virtual memory

- Formal verification

- Randomization (ASLR)

- Passwords

- Captchas

- Biometrics

- Location monitoring

- Mandatory access control (e.g., SELinux, very inconvenient)

- Discretionary access control (traditional Unix, Windows…)

- Automatic memory management (garbage collection)

- Static analysis

- Dynamic analysis

Security can affect just about any area of computer science. If there is a branch that doesn’t appear to be affected by security, it's because someone just hasn’t thought about it for long enough. This course isn’t about a specific tool or method, although many will be touched on. Primarily, we want to look at how to think about problems so that you see security issues. What can I do as an attacker? What can I do as a defender?

There are always benefits and costs to any security decision: by strengthening security in one way, you can weaken it in another. This matters if you can’t risk lockouts and downtime, where having passwords could cause problems. For example, the US Air Force had all the nuke codes set to 0000000...

If you make usability too difficult, users can (and will) find ways to bypass your security measures. Security is always a secondary concern. The primary concerns of users are the tasks that they are using the computer systems to complete. The most secure system is one that is off, in a locked room in a secure facility. However, that system is also completely useless.

Even if you do not become a computer security professional, you will design systems and make decisions that have security implications.

Reverse Engineering

Picked from the list at random to discuss.

- What is it?

  - Normal engineering process would be Design -> code -> system.

  - Reverse engineering is reversing that process: looking at the system to figure out the code and the design.

- Who?

  - Attackers

    - Analyzing defenses

      - If you can figure out how it works, then you can find weaknesses and exploit them.

    You become an expert safecracker by learning about safes. In order to find flaws in systems, you must have a deep knowledge of those systems. What an attacker wishes to attack he must master, and by finding the flaw, the attacker proves his knowledge. It is like solving a puzzle. That is what drives the people developing these attacks. The negative impacts are often secondary.

  - Defenders

    - Analyze defenses like attackers

    - Analyze attacks

      - (e.g., figure out what a botnet does and how it works)

      - Botnet – illegal cloud computing.
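Working backward from a system to its code can be sketched in Python, whose standard `dis` module disassembles compiled functions. This is only an illustration under stated assumptions: `check_pin` and its hard-coded value are hypothetical, and real reverse engineering usually targets native binaries with dedicated tools. The point is that the compiled artifact still contains what the author wrote, including embedded secrets.

```python
import dis

def check_pin(pin):
    # Hypothetical target: pretend we only have the compiled function,
    # not this source text.
    return pin == "4821"

def recover_string_constants(func):
    """Return the string literals embedded in a compiled function."""
    return [c for c in func.__code__.co_consts if isinstance(c, str)]

if __name__ == "__main__":
    # Step 1: look at the instructions the "system" actually executes.
    for instr in dis.get_instructions(check_pin):
        print(instr.opname, instr.argrepr)
    # Step 2: pull out embedded constants without ever guessing a PIN.
    print(recover_string_constants(check_pin))
```

The exact instruction names printed in step 1 vary between Python versions, but the embedded constant is recoverable either way. An attacker would use this to bypass the check; a defender would use the same technique to discover that a secret is stored where anyone can read it.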

DRM – Digital Rights Management

- People have been using reverse engineering to crack DRM since DRM was released.

- An interesting thing about DRM: it works to protect the content from the legitimate user, the very person you want to have the content.

- The most secure current DRM is iOS. It is currently very difficult to crack (or “jailbreak”). In fact, it may even be “effectively unbreakable” because the cost and time involved in breaking it aren’t worth it.

- Jailbreaking iOS used to be very popular, as it allowed users to use their iPhones in ways that Apple didn’t allow. However, it would also negatively impact the security of the device.

- The jailbreak community showed Apple where the security flaws in their devices were found. Apple could then fix the flaws. The community would find new flaws, and Apple would fix them.

- This evolution or “trial by fire” is the only way that security gets strong. No theoretical security can be trusted until it has had people try to crack it.

Today, attacks get put into usable software and distributed quickly. They spread fast.

Nation-states pay lots of people to reverse engineer systems and find the security holes. They do it in secret, but they can’t keep secrets, so the attacks they create get leaked.

The code of much modern malware that is causing problems has been written by nation-states.

We cannot make any system perfectly secure, but we don’t build systems under that assumption. We build systems that store large amounts of important data (how much data does Facebook have? Google? Governments?). We assume that we can do this securely, but we can’t.