DistOS-2011W Distributed Data Structures: a survey


Abstract

Access to data stored in main memory is much faster than access to local disks. Well-maintained data structures can take advantage of this speed. In addition, the memory fetch, dispatch and store operations of a distributed operating system would perform better if they exploited highly scalable distributed data structures. This paper describes the concept of Distributed Data Structures (DDS), which multicomputers have made viable. Scalability aspects and their implementation are reviewed for a chosen subset of classic data structures. Open research issues for scalable distributed data structures (SDDS) are also discussed.

Introduction

Multicomputers are collections of autonomous workstations or PCs on a network (network multicomputers), or of share-nothing processors with local storage linked through a high-speed network or bus (switched multicomputers)<ref> A. S. Tanenbaum, Distributed Operating Systems. Prentice Hall, 1995.</ref>. Recent advances in multicomputers have shown that they need new data structures that scale well with the number of components in order to make effective use of their aggregate performance <ref> W. Litwin, R. Moussa, and T. Schwarz, “Lh*RS: a highly-available scalable distributed data structure,” ACM Trans. Database Syst., vol. 30, pp. 769–811, September 2005. [Online]. Available: http://doi.acm.org/10.1145/1093382.1093386 </ref>. In addition, multicomputers with low-latency interconnects allow a data structure to vary significantly in size without suffering space utilization or access time penalties <ref>V. Gupta, M. Modi, and A. D. Pimentel, “Performance evaluation of the lh*lh scalable, distributed data structure for a cluster of workstations,” in Proceedings of the 2001 ACM symposium on Applied computing, ser. SAC ’01. New York, NY, USA: ACM, 2001, pp. 544–548. [Online]. Available: http://doi.acm.org/10.1145/372202.372458</ref>.

The rest of this paper is organized as follows. Section 2 presents work on distributed versions of classic data structures, including trees, sets, lists, graphs, queues, binary indexed trees (BITs) and distributed hash tables (DHTs). This work hides the distinction between local and distributed data structure manipulation, which simplifies the programming model at some cost in performance. Section 3 focuses on scalability issues in distributed data structure models that are based on linear hashing. Section 4 reviews security and performance parameters in SDDS research. Finally, Section 5 draws conclusions and summarizes the presented work.

Distributed Versions of Classic Data Structures

Hash Tables

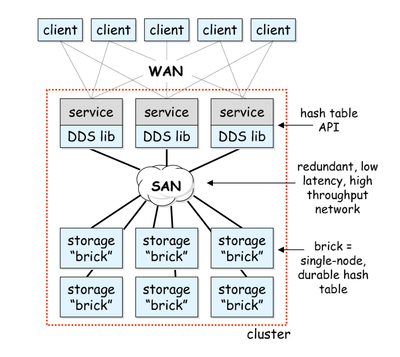

A distributed hash table can scale to higher throughput and larger data capacity than a locally deployed one. An architecture for a distributed hash table was proposed in <ref name="ref3">S. D. Gribble, E. A. Brewer, J. M. Hellerstein, and D. Culler, “Scalable, distributed data structures for internet service construction,” in Proceedings of the 4th conference on Symposium on Operating System Design & Implementation - Volume 4, ser. OSDI’00. Berkeley, CA, USA: USENIX Association, 2000, pp. 22–22.</ref>; it consists of the following components:

- Client (a web browser, for example): a component that is totally unaware of the underlying DDS infrastructure; that is, no part of the DDS runs on a client.

- Service: a set of cooperating software processes, each of which is called a service instance.

- Hash table API: the boundary between DDS libraries and their service instances.

- DDS library: a library (in Java) that presents the API of the hash table to services.

- Brick: the component that manages durable data. It consists of a lock manager, a buffer cache, a persistent chained hash table implementation, and network stubs and skeletons for remote communication. Each CPU in the cluster runs one brick.

Partitioning and replication work by horizontally partitioning tables to spread data and operations across bricks. Each brick stores a number of partitions of each table in the system, and when new nodes are added to the cluster, the partitioning is altered so that data is spread onto the new nodes. To keep portions of the hash table available despite node failures, each partition is replicated on more than one cluster node. Two problems arise: finding the partition that manages a specific key, and determining the list of replicas in that partition's replica group. To solve these, the DDS libraries consult two metadata maps, the data partitioning (DP) map and the replica group (RG) membership map, which are replicated on each node of the cluster. Each hash table in the cluster has its own pair of maps. The DP map controls the horizontal partitioning of data across the bricks, whereas the RG map returns the list of bricks that currently serve as replicas. All components of the distributed hash table are built in an asynchronous, event-driven programming style, and each layer is designed so that only a single thread executes in it at a time. In this manner, distributed hash tables simplify the construction of services, which can exploit the data consistency and scalability of the hash table <ref name="ref3" />.
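The two-step lookup through the DP and RG maps can be sketched in C as follows. This is only a minimal illustration and not the implementation from <ref name="ref3" />: the fixed table sizes, the modulo-based DP map, the contents of `rg_map`, and the names `dp_lookup`, `rg_lookup` and `bricks_for_key` are all assumptions made for the example.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define NUM_PARTITIONS 4   /* assumed: fixed number of partitions */
#define MAX_REPLICAS   3   /* assumed: upper bound on replicas per partition */

/* Replica group: the bricks that currently hold one partition. */
typedef struct {
    int    bricks[MAX_REPLICAS];
    size_t count;
} ReplicaGroup;

/* RG map: partition number -> replica group (hypothetical contents). */
static const ReplicaGroup rg_map[NUM_PARTITIONS] = {
    { {0, 1}, 2 }, { {1, 2}, 2 }, { {2, 3}, 2 }, { {3, 0}, 2 },
};

/* DP map: hashed key -> partition number. A plain modulo stands in for
   whatever structure a real DP map would use. */
static size_t dp_lookup(uint64_t key_hash) {
    return (size_t)(key_hash % NUM_PARTITIONS);
}

/* RG map lookup: partition number -> bricks serving as replicas. */
static const ReplicaGroup *rg_lookup(size_t partition) {
    assert(partition < NUM_PARTITIONS);
    return &rg_map[partition];
}

/* Resolve a hashed key to its replica list; returns the number of
   brick IDs written to out. */
size_t bricks_for_key(uint64_t key_hash, int out[MAX_REPLICAS]) {
    const ReplicaGroup *g = rg_lookup(dp_lookup(key_hash));
    for (size_t i = 0; i < g->count; i++)
        out[i] = g->bricks[i];
    return g->count;
}
```

A library built this way would send a read to any one brick in the returned list and a write to all of them; how the replicas are kept consistent is outside the scope of this sketch.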

Another method of distributing hash tables is to distribute their insert and lookup operations, that is, to call them locally on each processor <ref name="ref4"> S. Chakrabarti, E. Deprit, E.-J. Im, J. Jones, A. Krishnamurthy, C.-P. Wen, and K. Yelick, “Multipol: A distributed data structure library,” EECS Department, University of California, Berkeley, Tech. Rep. UCB/CSD-95-879, Jul 1995. [Online]. Available: http://www.eecs.berkeley.edu/Pubs/TechRpts/1995/5483.html </ref>. In this method, local operations allow each processor to process its local portion of the hash table. No synchronization is performed on such local operations, and no interaction scheme is defined between concurrent distributed operations. The buckets are distributed over the processors, and collisions are resolved by chaining. Moreover, the authors in <ref name="ref4" /> demonstrated two performance techniques: one-way communication and latency masking. They tested their model on a puzzle in which the algorithm alternates between a defined set of states and a newly generated set of valid moves, exploring around 100,000 positions. They compared three implementations of their model:

- A simple blocking algorithm where each processor iterates only over its local set and executes insert operations into the hash table. On a 32-node CM5, the process took around 28 seconds.

- A version that pipelines the inserts (where each processor must wait for the synchronization counters) reduced the running time to approximately 16 seconds.

- Finally, eliminating the acknowledgment traffic and using a global synchronization point reduced the running time to 11 seconds.

To conclude, this multi-ported nature of the hash table trades powerful semantics for good performance.

The main difference between the latter method and the former is not in the management of data but in the manipulation of metadata. The former method is deemed to have higher data availability because of the replication scheme it implements. However, this is a trade-off between the hardware resources consumed and what the software achieves.

Replicated List

Replicated lists are very similar to hash tables <ref name="ref4" />. They also have an iterator for local element traversal. One difference between the two is that local iterators over a hash table produce disjoint subsets of the elements, whereas the iterators of a replicated list do not. In <ref name="ref4" />, the authors proposed two performance improvements for replicated lists. The first is aggressive replication of the list, which is acceptable when the list stores only object IDs or global pointers. The second is to operate the list over a distributed object library that manipulates the global address space. This design of replicated lists works best with relatively small sets of large objects.

Octree

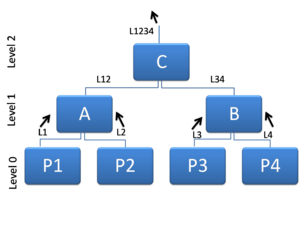

The octree data structure represents 3D objects as a disjoint union of cubes of varying sizes. Because of their simplicity and non-semantic properties, octrees are easy to manipulate <ref name="ref5">K. Yamaguchi, T. Kunii, K. Fujimura, and H. Toriya, “Octree-related data structures and algorithms,” Computer Graphics and Applications, IEEE, vol. 4, no. 1, pp. 53–59, Jan. 1984.</ref>. The authors in <ref name="ref6">P. Sojan Lal, A. Unnikrishnan, and K. Poulose Jacob, “Parallel implementation of octtree generation algorithm,” in Image Processing, 1998. ICIP 98. Proceedings. 1998 International Conference on, Oct. 1998, pp. 1005–1009, vol. 3. </ref> proposed a parallel implementation of octrees for medical applications. Their proposal focused on the bottom-up algorithm (figure 2), which they later ported to a Transputer.

Implementing a distributed version of octrees raises two challenges. The first is how to distribute the octree nodes among processors while retaining good load balancing and locality. The second is how to reduce the cost of remote node accesses for higher efficiency <ref name="ref4" />. It can be argued that, to achieve good load balancing, an octree needs to be partitioned so that each processor handles an equal number of inter-particle interactions <ref name="ref4" />. It is also highly preferable to distribute the tree so that each processor receives one or more complete subtrees, which helps preserve locality. This approach minimizes the number of remote accesses over the entire computation <ref name="ref4" />.

Distributed Search Trees (DST)

Unlike in the single-site case, no class of the usual leaf search trees can guarantee a logarithmic bound on the path length once the tree is distributed <ref name="ref7">B. Kröll and P. Widmayer, “Balanced distributed search trees do not exist,” in Algorithms and Data Structures, ser. Lecture Notes in Computer Science, S. Akl, F. Dehne, J.-R. Sack, and N. Santoro, Eds. Springer Berlin / Heidelberg, 1995, vol. 955, pp. 50–61, 10.1007/3-540-60220-8_50. [Online]. Available: http://dx.doi.org/10.1007/3-540-60220-8_50 </ref>. However, research on DSTs has attracted considerable attention, and much work has been proposed based on distributed random binary search trees <ref>B. Kröll and P. Widmayer, “Distributing a search tree among a growing number of processors,” in Proceedings of the 1994 ACM SIGMOD international conference on Management of data, ser. SIGMOD ’94. New York, NY, USA: ACM, 1994, pp. 265–276. [Online]. Available: http://doi.acm.org/10.1145/191839.191891</ref> and on a distributed variant of B-trees <ref>W. Litwin, M.-A. Neimat, and D. A. Schneider, “Rp*: A family of order preserving scalable distributed data structures,” in Proceedings of the 20th International Conference on Very Large Data Bases, ser. VLDB ’94. San Francisco, CA, USA: Morgan Kaufmann Publishers Inc., 1994, pp. 342–353. [Online]. Available: http://portal.acm.org/citation.cfm?id=645920.672842</ref>. The authors in <ref name="ref7" /> proved that the distributed height of a DST that results from a stable distribution of m keys is <math>\Omega(\sqrt{m})</math> in the worst case. They also proposed a method that builds a stable distribution satisfying the bound of <math>O(\sqrt{m})</math> on the height of its DST.

An interesting implementation of a DST was proposed in <ref>A. Di Pasquale and E. Nardelli, “A very efficient order preserving scalable distributed data structure,” in Database and Expert Systems Applications, ser. Lecture Notes in Computer Science, H. Mayr, J. Lazansky, G. Quirchmayr, and P. Vogel, Eds. Springer Berlin / Heidelberg, 2001, vol. 2113, pp. 186–199, 10.1007/3-540-44759-8_20. [Online]. Available: http://dx.doi.org/10.1007/3-540-44759-8_20 </ref>, where each server holds a unique set of keys and has a fixed capacity b. If more than b keys are added to a server, it is said to be in overflow and splits into two subtrees. In principle, these splitting operations build a virtual distributed tree. For a sequence of m insertions, there are at most <math>\lfloor\frac{2m}{b}\rfloor</math> splits.
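The split bound can be checked empirically with a small simulation. The sketch below rests on assumptions that the source does not spell out: a split moves half of the overflowing server's keys to a new server, and keys are always inserted into the fullest server, which is a hypothetical adversarial policy chosen to force as many splits as possible. The name `count_splits` and the `MAX_SERVERS` limit are illustrative only.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_SERVERS 1024   /* assumed upper limit for the simulation */

/* Insert m keys, always into the fullest server, and return how many
   splits occurred. Each server has capacity b. */
size_t count_splits(size_t m, size_t b) {
    size_t load[MAX_SERVERS] = {0};
    size_t servers = 1, splits = 0;
    assert(b >= 2);
    for (size_t k = 0; k < m; k++) {
        /* Pick the server that currently holds the most keys. */
        size_t fullest = 0;
        for (size_t s = 1; s < servers; s++)
            if (load[s] > load[fullest])
                fullest = s;
        load[fullest]++;
        if (load[fullest] > b) {            /* server is in overflow */
            assert(servers < MAX_SERVERS);
            size_t moved = load[fullest] / 2;
            load[servers++] = moved;        /* new server takes half the keys */
            load[fullest] -= moved;
            splits++;
        }
    }
    return splits;
}
```

Under these assumptions every server produced by a split starts roughly half full, so it needs on the order of b/2 further insertions before it can overflow again, which is consistent with the stated bound.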

SD-Rtrees

Scalable-Distributed-Rtree (SD-Rtree) is a scalable distributed version of Rtrees <ref name="ref11">C. du Mouza, W. Litwin, and P. Rigaux, “Large-scale indexing of spatial data in distributed repositories: the sd-rtree,” The VLDB Journal, vol. 18, pp. 933–958, 2009, 10.1007/s00778-009-0135-4. [Online]. Available: http://dx.doi.org/10.1007/s00778-009-0135-4 </ref>. It uses a distributed balanced binary tree that can scale to any number of storage servers by splitting overloaded ones. The structure of an SD-Rtree is a hybrid of an AVL tree, from which it takes its balanced binary shape, and an Rtree, from which it takes the principle of data organization. Its kernel structure is a binary tree that is mapped to a set of servers and satisfies the following properties:

- Every non-leaf node refers to two children.

- Every non-leaf node maintains left and right directory rectangles, which are the bounding boxes of the left and right subtrees respectively.

- All leaf nodes store a subset of the indexed objects.

- The height of the two subtrees at any node differs by one at most.

An internal node maintains the ID of its parent node. Each link carries four pieces of information: ID, the ID of the server that stores the referenced node; height, the height of the subtree rooted at the referenced node; dr, the directory rectangle of the referenced node; and type, which is either data or routing. Both data and routing nodes define an overlapping coverage and the ID of the parent routing node. In addition, a data node defines its local data set and the underlying directory rectangle, whereas a routing node defines a description of the routing node and the links to its left and right children.
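The link and routing-node layout described above can be sketched as C structures. The field and function names below (`Link`, `RoutingNode`, `rect_union`, `routing_dr`, `is_balanced`, `routing_height`) are assumptions made for illustration, as is the restriction to two dimensions; they are not taken from <ref name="ref11" />.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdlib.h>

/* Axis-aligned rectangle (two dimensions assumed). */
typedef struct { double xmin, ymin, xmax, ymax; } Rect;

typedef enum { NODE_DATA, NODE_ROUTING } NodeType;

/* A link to a node stored on some server. */
typedef struct {
    int      server_id;  /* ID of the server that stores the referenced node */
    int      height;     /* height of the subtree rooted at that node */
    Rect     dr;         /* directory rectangle of that node */
    NodeType type;       /* data or routing */
} Link;

/* A routing node holds links to its left and right children. */
typedef struct { Link left, right; } RoutingNode;

/* Smallest rectangle that encloses both a and b. */
Rect rect_union(Rect a, Rect b) {
    assert(a.xmin <= a.xmax && a.ymin <= a.ymax);
    assert(b.xmin <= b.xmax && b.ymin <= b.ymax);
    Rect r = {
        a.xmin < b.xmin ? a.xmin : b.xmin,
        a.ymin < b.ymin ? a.ymin : b.ymin,
        a.xmax > b.xmax ? a.xmax : b.xmax,
        a.ymax > b.ymax ? a.ymax : b.ymax,
    };
    return r;
}

/* Directory rectangle of a routing node: bounding box of both subtrees. */
Rect routing_dr(const RoutingNode *n) {
    return rect_union(n->left.dr, n->right.dr);
}

/* Balance property: the two subtree heights differ by at most one. */
bool is_balanced(const RoutingNode *n) {
    return abs(n->left.height - n->right.height) <= 1;
}

/* Height of the subtree rooted at this routing node. */
int routing_height(const RoutingNode *n) {
    int h = n->left.height > n->right.height ? n->left.height : n->right.height;
    return h + 1;
}
```

Because each link caches the height and directory rectangle of the node it points to, a routing node can test the balance property and compute its own rectangle without contacting the servers that hold its children.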

Balancing is preserved via rotations during a bottom-up height adjusting traversal. In SD-Rtrees particularly, balancing exploits the fact that the rectangle containment relationship allows for more freedom for reorganizing an unbalanced tree.

The authors in <ref name="ref11" /> claim that SD-Rtrees constitute a scalable, compact and efficient structure that makes use of the processing and storage capacity of a pool of distributed data servers, and that they are particularly important in clusters of servers.

Binary Indexed Trees (BITs)

BITs were developed to maintain the cumulative frequencies needed to support dynamic arithmetic data compression <ref name="ref12"> P. M. Fenwick, “A new data structure for cumulative frequency tables,” Softw. Pract. Exper., vol. 24, pp. 327–336, March 1994. [Online]. Available: http://dx.doi.org/10.1002/spe.4380240306 </ref>. A parallel implementation of BITs was proposed in <ref name="habashy">A. M. Elhabashy, A. B. Mohamed, and A. E. N. Mohamad, “An enhanced distributed system to improve the time complexity of binary indexed trees,” in World Academy of Science, Engineering and Technology, 2009, pp. 154–159.</ref> to improve the complexity of the read and update queries. This was achieved by partitioning the problem space into small distributed fragments that operate concurrently. Communication between the fragments was reduced as much as possible in order to obtain a speedup close to the maximum offered by the distributed parallel system. The authors implemented their system on parallel processors for simulation purposes. The following code <ref name="habashy" /> shows the function executed by each processor, where the check_zeros function checks whether all the digits of idx are zero, and the convert_zeros function sets to zero all the digits in the range given by its parameter list.

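```c
/* Function of each processor in the distributed BIT */
#include <stdbool.h>

#define WRITE true  /* assumed encoding of the operation type; not given in the source */

/* Helpers described in the text; their definitions are not shown in the source. */
bool check_zeros(const char *idx, int from, int to);
void convert_zeros(char *idx, int from, int to);

/* The source names the last parameter `ordr` while the body uses `P_order`;
   the two are assumed here to be the same value. */
char *computeIDX(char *idx, bool OP_type, int P_order)
{
    if (OP_type == WRITE)
    {
        if (*idx == '0')
        {
            if (check_zeros(idx, 0, P_order))
                return idx;
            *idx = '1';
            convert_zeros(idx, 0, P_order - 1);
            return idx;
        }
        return idx;
    }
    /* operation is read */
    if (*idx == '1')
        convert_zeros(idx, 0, P_order - 1);
    return idx;
}
```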

This model reduced the complexity of the read query to <math>O(\log \log N)</math> and that of the update query to constant <ref name="habashy" />.
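For comparison, the classical sequential BIT of <ref name="ref12" /> performs both operations in <math>O(\log N)</math> time. The sketch below shows that sequential structure only, not the distributed variant; the fixed size `BIT_SIZE` and the names `Bit`, `bit_update` and `bit_read` are assumptions made for the example.

```c
#include <assert.h>
#include <stddef.h>

#define BIT_SIZE 16   /* assumed number of symbols, indexed 1..BIT_SIZE */

typedef struct { long tree[BIT_SIZE + 1]; } Bit;

/* Add delta to the frequency stored at index idx (1-based). */
void bit_update(Bit *b, size_t idx, long delta) {
    assert(idx >= 1 && idx <= BIT_SIZE);
    /* idx & (~idx + 1) isolates the lowest set bit of idx. */
    for (; idx <= BIT_SIZE; idx += idx & (~idx + 1))
        b->tree[idx] += delta;
}

/* Cumulative frequency of indices 1..idx. */
long bit_read(const Bit *b, size_t idx) {
    assert(idx <= BIT_SIZE);
    long sum = 0;
    /* Strip the lowest set bit on every step until idx reaches zero. */
    for (; idx > 0; idx -= idx & (~idx + 1))
        sum += b->tree[idx];
    return sum;
}
```

Each loop touches at most one entry per bit of the index, which is where the logarithmic cost of the sequential version comes from.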

Task Queue

Task queues <ref name="ref4" /> can provide dynamic load balancing of a set of structures used to identify tasks. Task queues have four main functions: insertion of a new task, extraction of an existing task, load balancing and detection of whether the system is idle or not. For the load balancing task, two algorithms have been chosen by the authors. One uses a simple randomized load balancing scheme and the other uses heuristics for locality along with round-robin task pushing. The authors claim that both of them work well in cooperation with the task queue, where locality is not a major concern.

Linear and Dynamic Hashing Based SDDS

Distributed Linear Hashing

Linear hashing, a technique first proposed by Witold Litwin in 1980 and reprinted in 1988, is a hashing scheme that allows the address space to expand or contract dynamically <ref name="ref14">W. Litwin, “Linear hashing: a new tool for file and table addressing.” in Readings in database systems. San Francisco, CA, USA: Morgan Kaufmann Publishers Inc., 1988, pp. 570–581. [Online]. Available: http://portal.acm.org/citation.cfm?id=48751.48788</ref>. Hence, it allows a file or a table to support any number of deletions or insertions without deteriorating the memory load performance. Linear hashing works by organizing files into buckets; a collection of buckets forms an LH file. Each collection is addressable through a pair of hashing functions.
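The role of the pair of hashing functions can be illustrated with the usual linear hashing address computation. The sketch assumes a file that starts with a single bucket and uses the hash functions <math>h_i(c) = c \bmod 2^i</math>; the names `LhState`, `h` and `lh_address` are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* File state: level i and split pointer n, with 0 <= n < 2^i.
   The file is assumed to start with a single bucket. */
typedef struct { unsigned level; uint64_t split; } LhState;

/* h_i(c) = c mod 2^i */
static uint64_t h(unsigned level, uint64_t key) {
    return key % ((uint64_t)1 << level);
}

/* Bucket address of a key: use h_i, and fall back to h_{i+1} when the
   bucket selected by h_i has already been split at this level. */
uint64_t lh_address(const LhState *f, uint64_t key) {
    assert(f->level < 63);
    uint64_t a = h(f->level, key);
    if (a < f->split)
        a = h(f->level + 1, key);
    return a;
}
```

With level 2 and split pointer 1, the file holds buckets 0 to 4: keys that hash to bucket 0 under the first function are rehashed with the second one, while all other keys keep the address given by the first.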

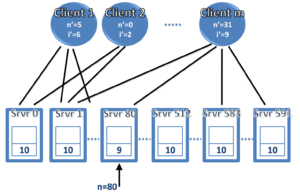

Linear hashing can be applied in a distributed manner <ref name="ref15">W. Litwin, M. A. Neimat, and D. A. Schneider, “Lh*: Linear hashing for distributed files,” SIGMOD Rec., vol. 22, pp. 327–336, June 1993. [Online]. Available: http://doi.acm.org/10.1145/170036.170084</ref>. To do so, each bucket is placed at a different server and retains its bucket level in its header (figure 3).

An LH* file expands in the same way as an LH file: when a bucket overflows, a split is triggered and the file grows by one bucket. In linear hashing, the bucket that splits is the one designated by the split pointer n, which is not necessarily the bucket that overflowed; the pointer then advances to n+1. The definition of a bucket overflow is beyond the scope of this paper (please refer to <ref name="ref14"/> for a detailed description). Each further collision triggers another split in the same way. This scheme was further enhanced by the authors in <ref name="ref16">W. Litwin, M.-A. Neimat, and D. A. Schneider, “Lh*: a scalable, distributed data structure,” ACM Trans. Database Syst., vol. 21, pp. 480–525, December 1996. [Online]. Available: http://doi.acm.org/10.1145/236711.236713 </ref> by adding load control that is performed at no additional message cost, which preserves bandwidth. The old mechanism, which lacks load control, gave a load factor of 65-70%. With the refinement, the load factor increased to 80-95% regardless of the file size.
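A single split step can be sketched as follows, using the standard formulation of linear hashing in which a file that started with one bucket and is at level i splits the bucket at pointer n into buckets n and <math>n + 2^i</math>. The sketch tracks only the file state; in a distributed file each bucket would reside on its own server. The names `LhState`, `lh_split` and `moves_to_new_bucket` are assumptions.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* File state: level i and split pointer n, with 0 <= n < 2^i. */
typedef struct { unsigned level; uint64_t split; } LhState;

/* Perform one split and return the number of the newly created bucket. */
uint64_t lh_split(LhState *f) {
    assert(f->level < 62);
    uint64_t size = (uint64_t)1 << f->level;   /* 2^i buckets at this level */
    uint64_t created = f->split + size;        /* new bucket n + 2^i */
    f->split++;
    if (f->split == size) {                    /* every bucket of the level has split */
        f->level++;
        f->split = 0;
    }
    return created;
}

/* A key stored in the bucket being split at the given level moves to the
   new bucket exactly when bit `level` of the key is set. */
bool moves_to_new_bucket(unsigned level, uint64_t key) {
    return ((key >> level) & 1u) != 0;
}
```

Starting from a single bucket, four successive calls create buckets 1, 2, 3 and 4, so the file always grows by exactly one bucket per split.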

Distributed Dynamic Hashing (DDH)

Methods of handling overflows in static hashing increase retrieval time as files approach their space limits. Dynamic hashing structures have been proposed to eliminate such problems <ref>R. J. Enbody and H. C. Du, “Dynamic hashing schemes,” ACM Comput. Surv., vol. 20, pp. 85–113, July 1988. [Online]. Available: http://doi.acm.org/10.1145/46157.330532</ref>. A distributed approach to dynamic hashing has been proposed and tested; it takes the idea of dynamic hashing algorithms and applies it to distributed systems <ref name="ref18">R. Devine, “Design and implementation of ddh: A distributed dynamic hashing algorithm,” in Foundations of Data Organization and Algorithms, ser. Lecture Notes in Computer Science, D. Lomet, Ed. Springer Berlin / Heidelberg, 1993, vol. 730, pp. 101–114, 10.1007/3-540-57301-1_7. [Online]. Available: http://dx.doi.org/10.1007/3-540-57301-1_7 </ref>. Its main idea is to spread data across several servers using a new autonomous location discovery technique, which replaces a centralized directory for locating buckets with gradual learning of bucket locations. It relies on a server splitting mechanism in which each server decides for itself when to split. Each server retains the following information about each bucket: the bucket number, its split level and the bucket contents <ref name="ref18" />. In addition, each server stores a directory containing information about other servers' buckets. When a server receives a request from a client regarding a data item, it checks whether it is the correct recipient by comparing the data item's key with the key ranges of its buckets. If the server is not the intended recipient, it forwards the message to the correct one. One problem with this design is that it requires each server to keep track of all other servers in order to forward messages correctly. Finally, the server that handles the request replies to the client with the status of the operation, which allows the client to update its local view of the hash table.

In a test that used 50,000 records, almost 200,000 network messages were required to insert and then retrieve all records <ref name="ref18" />. This large number of messages is considered the bottleneck of the design; the lower the network latency, the better the model performs.

High Performance Multi-attribute access SDDS

Multi-attribute access distributed data structures can be built on the linear hashing algorithm proposed in <ref name="ref15" />. A model was proposed in <ref>W. Litwin and M.-A. Neimat, “k-rp*s: a scalable distributed data structure for high-performance multi-attribute access,” in Parallel and Distributed Information Systems, 1996., Fourth International Conference on, Dec. 1996, pp. 120–131. </ref>, in which data reside on servers and are located through a global access rule. To avoid hot spots, no centralized address directory is set up. A client stays connected to a server only while a communication stream is alive. Therefore, changes to the address space as it grows or shrinks are not posted synchronously to the clients. Every client has an image, its own private addressing schema, which can be partly outdated with respect to the actual addressing state. If a client sends a query to an incorrect server (according to the global rule), the server forwards the query to the correct address. When the message reaches the correct server, that server sends the client an Image Adjustment Message (IAM) containing the correct address. If a server becomes overloaded with inserts, it splits and a new server is added.
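The interplay between an outdated client image, forwarding and the IAM can be illustrated with a deliberately simplified sketch. It assumes that the global rule assigns a key range to each server, that forwarding always reaches the owner in a single hop, and that a client prefers the narrowest range it knows for a key; none of these details, nor the names `Entry`, `Image`, `lookup` and `query`, come from the cited model.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_ENTRIES 8   /* assumed capacity of an image */

/* One addressing entry: `server` owns the keys in [low, high). */
typedef struct { int low, high, server; } Entry;

/* An addressing schema: the actual file state or a client's image of it. */
typedef struct { Entry e[MAX_ENTRIES]; size_t n; } Image;

/* Narrowest entry whose range contains key, or NULL if there is none. */
static const Entry *lookup(const Image *img, int key) {
    const Entry *best = NULL;
    for (size_t i = 0; i < img->n; i++) {
        const Entry *c = &img->e[i];
        if (key >= c->low && key < c->high &&
            (best == NULL || c->high - c->low < best->high - best->low))
            best = c;
    }
    return best;
}

/* Send a query for key. Returns the number of forwards (0 or 1) and,
   after a forward, applies the owner's IAM to the client image. */
int query(Image *client, const Image *actual, int key) {
    const Entry *guess = lookup(client, key);
    const Entry *owner = lookup(actual, key);
    assert(guess != NULL && owner != NULL);
    if (guess->server == owner->server)
        return 0;                        /* the image was correct */
    /* The wrongly addressed server forwards the query; the owner then
       returns an IAM carrying its own range. */
    assert(client->n < MAX_ENTRIES);
    client->e[client->n++] = *owner;
    return 1;
}
```

In this sketch a client pays one forward the first time it addresses a key whose server has split, and none afterwards, because the IAM has refined its image.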

All of the proposed models that are based on linear hashing have one thing in common: they maintain the address directory in a decentralized fashion. This is considered the key factor in obtaining a high-performance yet scalable distributed data structure.

SDDS using Reed Solomon codes

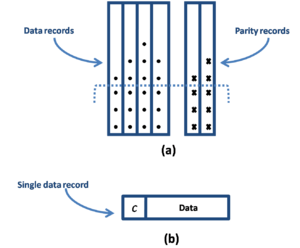

A novel method that uses Reed-Solomon codes for the parity calculus was proposed by Witold Litwin and Thomas Schwarz <ref name="ref20">W. Litwin and T. Schwarz, “Lh*rs: a high-availability scalable distributed data structure using reed solomon codes,” in Proceedings of the 2000 ACM SIGMOD international conference on Management of data, ser. SIGMOD ’00. New York, NY, USA: ACM, 2000, pp. 237–248. [Online]. Available: http://doi.acm.org/10.1145/342009.335418 </ref>. It uses the concept of record grouping to provide scalability and high availability. Record grouping works as follows. A data record consists of its key c and a non-key part. A linear hash function gives the correct bucket a for the data record with key c. A bucket group consists of m successively created buckets; all groups have the same size, except possibly the last one. Bucket a belongs to the group numbered <math>g = \lfloor \frac{a}{m} \rfloor</math> (integer division). Every bucket group is provided with <math>k \geq 1</math> parity buckets in which the parity records for the group are stored. Figure 4(a) shows a bucket group containing four data buckets with their data records and two parity buckets with their parity records.

Each data record has a rank, which represents its position in its data bucket. A record group contains all the data records that have the same rank r within the same bucket group. The k parity buckets hold parity records for all record groups. A parity record consists of the rank r of its record group, followed by the primary keys of all the data records in the record group, and then the parity data, which is calculated from the data records and the number of the parity bucket.
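The grouping and recovery idea can be illustrated with a simplified sketch that uses a single parity bucket (k = 1) and plain XOR in place of Reed-Solomon codes. XOR is a swapped-in technique that tolerates the loss of only one record per record group, whereas the scheme described above uses Reed-Solomon codes to tolerate more. The group size, the fixed record length and all names below are assumptions.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define GROUP_SIZE 4   /* m: data buckets per bucket group (assumed) */
#define RECORD_LEN 8   /* assumed fixed length of the non-key part */

/* Non-key part of one data record, or the parity data of a parity record. */
typedef struct { uint8_t bytes[RECORD_LEN]; } Record;

/* Bucket group number g = a / m (integer division). */
size_t bucket_group(size_t bucket) {
    return bucket / GROUP_SIZE;
}

/* XOR src into dst, byte by byte. */
static void xor_into(Record *dst, const Record *src) {
    for (size_t i = 0; i < RECORD_LEN; i++)
        dst->bytes[i] ^= src->bytes[i];
}

/* Parity for one record group: the records of equal rank, one per bucket. */
Record make_parity(const Record group[GROUP_SIZE]) {
    Record p;
    memset(&p, 0, sizeof p);
    for (size_t j = 0; j < GROUP_SIZE; j++)
        xor_into(&p, &group[j]);
    return p;
}

/* Rebuild the record of bucket `lost` from the parity and the survivors. */
Record recover(const Record group[GROUP_SIZE], const Record *parity, size_t lost) {
    assert(lost < GROUP_SIZE);
    Record r = *parity;
    for (size_t j = 0; j < GROUP_SIZE; j++)
        if (j != lost)
            xor_into(&r, &group[j]);
    return r;
}
```

Record recovery reads the surviving members of one record group plus its parity record; bucket recovery would repeat the same step for every rank in the lost bucket.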

The file operations described by the authors are record recovery, bucket recovery, key search, scan, insert, split, update, deletion and merge. Their simulation showed that this scheme is best suited to data-intensive applications.

This model is based on the linear hashing technique (also proposed by Litwin), which has a drawback: splits must be ordered for the clients, since they are required to follow the bucket numbering order within each level. The authors in <ref>X. Ren and X. Xu, “Eh*rs: A high-availability scalable distributed data structure,” in Algorithms and Architectures for Parallel Processing, ser. Lecture Notes in Computer Science, H. Jin, O. Rana, Y. Pan, and V. Prasanna, Eds. Springer Berlin / Heidelberg, 2007, vol. 4494, pp. 188–197, 10.1007/978-3-540-72905-1_17. [Online]. Available: http://dx.doi.org/10.1007/978-3-540-72905-1_17 </ref> proposed a new model that avoids this splitting problem. Again, they used Reed-Solomon codes to provide the scalable, distributed and highly available files required by modern applications. Each parity bucket server incurs an additional storage overhead for the data specific to the RS calculus, which is almost minimal for any k-available file. The file structure of this model is very similar to the one proposed by Litwin and Schwarz in <ref name="ref20" />. Finally, the authors claim that their model is best suited to data-intensive applications, including database management systems and P2P computing.

SDDS using Record Grouping

High availability can be achieved through record grouping <ref name="ref22">W. Litwin and T. Risch, “Lh*g: a high-availability scalable distributed data structure by record grouping,” Knowledge and Data Engineering, IEEE Transactions on, vol. 14, no. 4, pp. 923–927, Jul. 2002.</ref>. A group is a logical structure of up to k records, where k is a file parameter. The authors in <ref name="ref22" /> proposed such a model, and their simulations showed that it is efficient and scalable.

Security and Performance Parameters

SDDS allow an application to fetch data in constant time, even if the user's node has an outdated view of the system. The drawback of distributed memories is the possibility that one or more nodes are unavailable, and this probability grows with the number of nodes. As a result, performance parameters and quality measures have to be taken into consideration when building a model of distributed data structures. In addition, distributed data is vulnerable to unauthorized remote or local access <ref name="ref28"> W. Litwin, M.-A. Neimat, G. Lev, S. Ndiaye, and T. Seck, “Lh*s: a high-availability and high-security scalable distributed data structure,” in Research Issues in Data Engineering, 1997. Proceedings. Seventh International Workshop on, Apr. 1997, pp. 141–150. </ref>, which makes it worthwhile to secure SDDS schemes against such access. This section reviews research on performance enhancement and monitoring in SDDS, as well as models for securing and encrypting stored data.

Parity Checking

Implementing a parity-based DDS raises two problems: first, how to speed up updates while maintaining coherence between user data and parity; second, how to ensure that this coherence is maintained without costly messaging. The authors in <ref name="ref23"> D. Cieslicki, S. Schaeckeler, and T. Schwarz, “Maintaining and checking parity in highly available scalable distributed data structures,” Journal of Systems and Software, vol. 83, no. 4, pp. 529–542, 2010. [Online]. Available: http://www.sciencedirect.com/science/article/B6V0N-4XFPPV8-1/2/a5d63c8eb3ad0e81df436cacc50dbfb9 </ref> presented a watermarking solution that maintains coherence while remaining relatively fast. The problem with their model is a new failure mode, the lost update, which arises from a combination of message and site losses. The authors claim that their model can also be used in a failure-prone environment such as P2P storage.

Data Object Change Detection

Algebraic signatures were proposed as a solution for detecting data object changes <ref name="ref24"> W. Litwin and T. Schwarz, “Algebraic signatures for scalable distributed data structures,” in Data Engineering, 2004. Proceedings. 20th International Conference on, Mar. 2004, pp. 412–423. </ref>. They can be applied to SDDS that are based on linear hashing, where updates that do not actually change a record can be filtered out at the client. The scheme can also be used for fast disk backup of the buckets. The authors in <ref name="ref24" /> replace the 20-byte SHA-1 signature with a 4-byte algebraic one, and claim that their algebraic calculus is twice as fast as that of SHA-1. Algebraic signatures were later used for string matching <ref name="ref25"> R. Mokadem and W. Litwin, “String-matching and update through algebraic signatures in scalable distributed data structures,” in Database and Expert Systems Applications, 2006. DEXA ’06. 17th International Workshop on, 2006, pp. 708–711. </ref>. The authors in <ref name="ref25" /> implemented a string matching technique using algebraic signatures and successfully integrated it with the model previously proposed in <ref name="ref24" />.

Dynamic Object Management

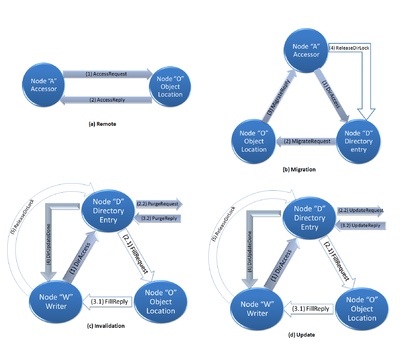

Insightful allocation of and access to distributed data can yield substantial performance gains, and dynamic data management policies can arguably help achieve this. The authors in <ref name="ref26"> B. Totty and D. Reed, “Dynamic object management for distributed data structures,” in Supercomputing ’92. Proceedings, Nov. 1992, pp. 692–701. </ref> proposed a methodology to evaluate how policy performance varies across algorithms. They defined a set of data management mechanisms, simulated various policies for each of them and analyzed the efficacy of the policies on various parallel scientific benchmarks. A single data management policy can give very good performance for instruction streams and adequate performance for uniprocessor data streams. On parallel systems, however, its performance can be very poor. In addition, the interactions of concurrent accesses are chaotic and depend on the data structure. Access policies exchange messages between the nodes of a distributed memory multiprocessor to execute the data management protocol. Four protocols are depicted graphically in figure 5. Figure 5(a) shows the remote policy, the simplest one, in which a request message and a reply message pass between the accessing node and the node that owns the object data. The other three policies allow constituent object data to relocate dynamically.

The authors in <ref name="ref26" /> carried out experiments that supported the assumption that data management tailored to a specific data structure can yield large performance improvements over a single, system-imposed policy. Their experiments also showed that simple yet dynamic policies generate relatively higher memory locality than static placement schemes. The price of this increase in locality is a higher protocol message count and volume.
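
The trade-off between locality and message count can be made concrete with a toy model. The sketch below implements the remote policy as described (one request and one reply per non-local access) and contrasts it with a hypothetical migrate-on-access policy. The class names, the `run` helper and the migration rule are assumptions for illustration only and do not reproduce the policies evaluated in <ref name="ref26" />.

```python
class RemotePolicy:
    """The object stays at its owner; each non-local access costs request + reply."""

    def __init__(self, owner: int) -> None:
        self.owner = owner
        self.messages = 0
        self.local_hits = 0

    def access(self, node: int) -> None:
        if node == self.owner:
            self.local_hits += 1
        else:
            self.messages += 2  # one request message, one reply message


class MigratePolicy(RemotePolicy):
    """Hypothetical dynamic policy: the object moves to whichever node touches it."""

    def access(self, node: int) -> None:
        if node == self.owner:
            self.local_hits += 1
        else:
            self.messages += 2  # request, plus a reply that carries the object
            self.owner = node   # later accesses from this node are local


def run(policy: RemotePolicy, trace: list[int]) -> tuple[int, int]:
    """Replay a trace of accessing node ids; return (messages, local_hits)."""
    for node in trace:
        policy.access(node)
    return policy.messages, policy.local_hits
```

With the object initially owned by node 0, five consecutive accesses from node 1 cost 10 messages under `RemotePolicy` but only 2 under `MigratePolicy`. For the alternating trace `[0, 1, 0, 1]`, the remote policy needs 4 messages and the migrating one 6. Even in this toy model, which policy wins depends on the access pattern.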

DDS Optimization

An optimization technique for DDS was proposed in <ref name="ref27"> R. Bhoedjang, J. Romein, and H. Bal, “Optimizing distributed data structures using application-specific network interface software,” in Parallel Processing, 1998. Proceedings. 1998 International Conference on, Aug. 1998, pp. 485 –492. </ref>, where the authors showed that high performance gains can be achieved by implementing support for application-specific shared data structures on the network interface processors. They claimed that their customized software (application-specific) significantly reduced the overhead of interactions between the network interface and the host. Moreover, their software made use of application semantics to gain a simple and efficient communication protocol.

Security in SDDS

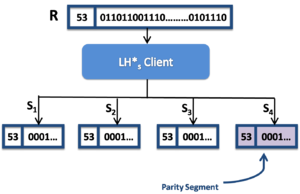

SDDS can be secured by a linear hashing based technique using bit-level striping, so that no record becomes known to an intruder, a site, or a network <ref name="ref28"> W. Litwin, M.-A. Neimat, G. Lev, S. Ndiaye, and T. Seck, “Lh*s: a high-availability and high-security scalable distributed data structure,” in Research Issues in Data Engineering, 1997. Proceedings. Seventh International Workshop on, Apr. 1997, pp. 141 –150. </ref>. Assume that a record R consists of a key c and a sequence of bits B. When a client wants to store R, it proceeds as follows (figure 6):

- k > 1 segments are produced. The i-th segment <math>s_i</math> consists of c and of the bits <math>s'_i</math>:

<math>s'_i = b_i . b_{k+i} . b_{2k+i}...</math>

- A parity segment <math>s_{k+1}</math> is produced that also contains c, together with the parity bits <math>s'_{k+1}</math>. For even parity, for example:

<math>s'_{k+1} = b'_{1} . b'_{2} ... b'_{m}</math>

where bit <math>b'_{j}</math> is the parity bit for the string formed by the j-th bit of each segment; 1<math>\leq</math>j<math>\leq</math>m. If a segment s of R cannot be read, the parity segment allows s to be reconstructed.
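
The striping steps above can be sketched as follows. This is a minimal illustration under stated assumptions: bits are held in Python lists, the key c that accompanies every segment is omitted, the bit string is zero-padded so that all segments have the same length m, and the function names `stripe`, `reconstruct` and `merge` are hypothetical.

```python
def stripe(bits: list[int], k: int) -> list[list[int]]:
    """Split bits into k segments (bit i, k+i, 2k+i, ...) plus one even-parity segment."""
    if k < 2:
        raise ValueError("k must be greater than 1")
    m = -(-len(bits) // k)                      # segment length, rounded up
    padded = bits + [0] * (m * k - len(bits))   # pad so every segment has m bits
    segments = [padded[i::k] for i in range(k)]
    # Parity bit j makes the number of 1s among the j-th bits of all segments even.
    parity = [sum(column) % 2 for column in zip(*segments)]
    return segments + [parity]


def reconstruct(segments: list[list[int]], missing: int) -> list[int]:
    """Rebuild the segment at index `missing` from all the other segments."""
    survivors = [seg for idx, seg in enumerate(segments) if idx != missing]
    return [sum(column) % 2 for column in zip(*survivors)]


def merge(data_segments: list[list[int]], length: int) -> list[int]:
    """Interleave the k data segments back into the original bit string."""
    k = len(data_segments)
    bits = [0] * (k * len(data_segments[0]))
    for i, seg in enumerate(data_segments):
        bits[i::k] = seg
    return bits[:length]
```

For example, `stripe([1, 0, 1, 1, 0, 0, 1], 3)` yields the data segments `[1, 1, 1]`, `[0, 0, 0]`, `[1, 0, 0]` and the parity segment `[0, 1, 1]`. Any single lost segment can be recovered with `reconstruct`, but a single parity segment cannot repair two simultaneous losses.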

File <math>S_{i}</math> stores all the segments <math>s_i</math>. The address of segment <math>s_i</math> is calculated from its key c, which is the key of R. For SA segment files, the segments of a record R are usually in buckets with the same address m within their respective segment files. The client retains a single image, with the file level <math>i'</math> and the value of the split pointer <math>n'</math>, for all <math>S</math>. For SD segment files, the bucket addresses of the segments differ; hence, the client holds one image per <math>S</math>, and these images usually differ too. As in a distributed linear hashing file, every <math>S</math> expands via splits, tolerates addressing errors, and sends IAMs to its calling clients. Splits within segment files are not necessarily synchronized; hence, even in SA segment files, bucket m in a segment file <math>S_i</math> may split before bucket m in another segment file <math>S_j;j\neq i</math>. One possible reason is that <math>S_j</math> failed when it should have split after a new insert. A new bucket in <math>S_i</math> may then contain a segment of record R while another segment of R is still in bucket m in <math>S_j</math>. Consequently, the addresses of the segments of a record in their SA segment files may sometimes differ. A common component at a server, namely the segment file coordinator (SC), is defined for the whole set of segment files <math>S</math>, and its address is announced to all servers and clients. It manages the linear hashing file coordination for each <math>S</math>, including all the allocation tables. In particular, SC is alerted when a bucket failure is detected. The alert may come from a client whose file manipulation failed or from a server that could neither forward a message nor split. When a failure occurs, SC coordinates spare creation.

This method appears attractive for securing SDDS, as it provides both fault tolerance and high data security. The cost of the described security add-on is an increase in the storage needed for a file and additional control messaging. The access performance of the proposed technique may be affected by scans, especially if bit-level striping is used. Finally, some security may be traded for better access performance by using attribute-level striping instead.
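
The role of the client image can be illustrated with the standard linear hashing address computation. The sketch assumes integer keys, the hash family h_i(c) = c mod 2^i, and a file that starts with a single bucket. The function name `lh_address` is hypothetical.

```python
def lh_address(key: int, level: int, split_pointer: int) -> int:
    """Bucket address of `key` for a file (or client image) at the given state.

    level         -- file level i (or i' in a client image)
    split_pointer -- split pointer n (or n' in a client image)
    """
    address = key % (2 ** level)
    if address < split_pointer:
        # This bucket has already split at the current level, so the
        # next-level hash function decides between it and its new sibling.
        address = key % (2 ** (level + 1))
    return address


# A client whose image lags behind the file may compute an outdated address.
client_guess = lh_address(7, level=1, split_pointer=0)   # stale image (i'=1, n'=0)
true_address = lh_address(7, level=2, split_pointer=1)   # actual file state (i=2, n=1)
```

Here the stale image sends key 7 to bucket 1, whereas the record actually lives in bucket 3. This is the kind of addressing error that a segment file tolerates by forwarding the request and correcting the client image with an IAM.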

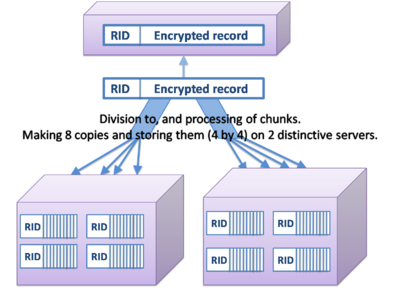

A further security proposal was made by the authors in <ref name="ref29"> T. Schwarz, P. Tsui, and W. Litwin, “An encrypted, content searchable scalable distributed data structure,” in Data Engineering Workshops, 2006. Proceedings. 22nd International Conference on, 2006, p. 18. </ref> for encrypting the content of an SDDS. Encryption raises the challenge of how to preserve the capability of parallel search. In <ref name="ref29" />, the authors proposed a scheme that achieves encryption while preserving parallel search capabilities by creating a collection of additional SDDS indices. Those indices are encrypted in such a way that parallel string searches can still be performed at the storage sites. To implement this, a record consists of a key, which acts as a Record Identifier (RI), and a Record Content field (RC). The database (a collection of records) is stored over multiple sites in strongly encrypted form. An index record is then generated as follows: the RC is divided into equal-sized chunks, and each chunk is encrypted using Electronic Code Book (ECB) encryption. The ECB encryption can be further strengthened by using lossy compression to remove redundancy. In addition, index records are dispersed over multiple sites so as to limit the amount of information available to an attacker at any one site. Figure 7 shows this scheme.

Tests of this scheme showed that redundancy removal is effective in making the index records appear more random. The results roughly showed that a chunk size of 6 ASCII characters, combined with dispersing index records into 3 records, could result in a relatively secure code.
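
The reason chunk-wise ECB encryption keeps the index searchable is that equal plaintext chunks always map to equal ciphertext chunks, so a storage site can match an encrypted pattern without seeing the plaintext. The sketch below illustrates only this property. As a loudly stated assumption, it replaces the block cipher with a truncated HMAC-SHA256 token (deterministic and keyed, but not decryptable), searches only for patterns aligned to chunk boundaries, and leaves out compression and dispersal. All function names are hypothetical and the code is not the implementation of <ref name="ref29" />.

```python
import hashlib
import hmac


def chunks(text: str, size: int) -> list[str]:
    """Cut the record content into consecutive chunks of `size` characters."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def token(key: bytes, chunk: str) -> bytes:
    """Deterministic keyed token standing in for one ECB-encrypted chunk."""
    return hmac.new(key, chunk.encode("ascii"), hashlib.sha256).digest()[:8]


def index_record(key: bytes, content: str, size: int = 6) -> list[bytes]:
    """Client side: build the searchable index record for one RC field."""
    return [token(key, c) for c in chunks(content, size)]


def server_search(index: list[bytes], pattern_tokens: list[bytes]) -> list[int]:
    """Server side: find chunk positions where the token sequence occurs.

    The server compares opaque tokens only; it never sees key or plaintext.
    """
    n = len(pattern_tokens)
    return [pos for pos in range(len(index) - n + 1)
            if index[pos:pos + n] == pattern_tokens]
```

A client holding the key would call `index_record(key, pattern)` to tokenize a search string and send the tokens to every site, and each site would run `server_search` on its index records in parallel. The same determinism that enables matching also leaks repeated chunks, which is why the survey mentions redundancy removal and dispersal as additional protection.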

Conclusion

High-speed interconnected multicomputers have enabled research progress in the areas of DDS and SDDS. Fast access to large volumes of data can be provided via a highly available SDDS. This paper surveyed a broad range of DDS and SDDS types. It focused on research outcomes that aimed to implement and analyze distributed versions of classic data structures. Moreover, the paper showed how scalability issues in SDDS are managed and discussed an incomplete set of performance measures for distributed data structures. Most of the presented linear hashing based models agreed on retaining the servers' addresses in a decentralized manner to avoid bottlenecks and the formation of hotspots. Some of the listed types achieved great performance, whereas others contributed little. All of the surveyed work agreed that the network latencies of a multicomputer impact the performance of any of the proposed distributed models. No performance comparison has been made between the presented schemes; however, some observations and discussions were offered. This survey does not cover research proposals for distributed data structures that target P2P systems. An incomplete list of examples includes: RAQ: A range queriable DDS, G-Grid, DDS for P2P Systems, DDS for Supporting P2P Range Query and Distributed Space Partitioning Tree. A future extension of this work will cover this area.

References

<references/>