COMP 3000 Essay 2 2010 Question 9

Revision as of 06:45, 3 December 2010

Paper

Authors:

- Muli Ben-Yehuda +

- Michael D. Day ++

- Zvi Dubitzky +

- Michael Factor +

- Nadav Har’El +

- Abel Gordon +

- Anthony Liguori ++

- Orit Wasserman +

- Ben-Ami Yassour +

Research labs:

+ IBM Research – Haifa

++ IBM Linux Technology Center

Website: http://www.usenix.org/events/osdi10/tech/full_papers/Ben-Yehuda.pdf

Video presentation: http://www.usenix.org/multimedia/osdi10ben-yehuda [Note: username and password are required for entry]

Background Concepts

Before we delve into the details of the research paper, it's essential that we provide some background on the concepts and notions discussed by the authors.

Virtualization

In essence, virtualization is the creation of an emulation of the underlying hardware on which a guest operating system, program or process can run. [1] This emulation, usually referred to as a virtual machine, is managed by a hypervisor, which gives the guest the illusion that it is running on the bare hardware. In reality, the host treats the virtual machine much like an ordinary application.

The term virtualization has become rather broad and is associated with a number of areas where the technology is used: data virtualization, storage virtualization, mobile virtualization and network virtualization. For the purposes of our assigned paper, we shall focus on full virtualization of hardware in the context of operating systems.

Hypervisor

Also referred to as a VMM (virtual machine monitor), a hypervisor is a software layer that sits one level above the supervisor (the operating system kernel) in privilege. In its classic form it runs directly on the bare hardware and monitors the execution and behaviour of the guest virtual machines. The main task of the hypervisor is to provide an emulation of the underlying hardware (CPU, memory, I/O devices, etc.) to the guest virtual machines. It must also deal with the issues that may arise from the interaction of those guests with one another and with the host hardware and operating system. The hypervisor also retains control of the host's resources: a guest cannot access resources that the VMM has not allocated to it, and it is generally unaware of how the VMM manages them. [2]

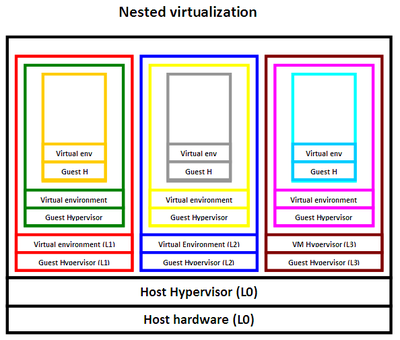

Nested virtualization

Nested virtualization is the concept of recursively running one or more virtual machines inside another virtual machine. For instance, the host hypervisor (L0) can run a guest virtual machine L1. If L1 is itself a hypervisor, it can run its own virtual machine L2, which can in turn run L3, and so on (Figure 1).

Protection rings

On x86 processors there are four levels of access privilege, called rings, numbered from 0 to 3. Ring 0 is the most privileged level and allows direct access to the hardware components. The operating system kernel must execute in Ring 0 in order to access the hardware and retain control. User programs execute in Ring 3. Rings 1 and 2 were intended for device drivers and other system services, although most modern operating systems use only Rings 0 and 3. [7] Hardware virtualization extensions such as Intel VMX add a separate distinction between root mode, where the hypervisor runs, and non-root (guest) mode, where virtual machines run.

Para-virtualization

Para-virtualization is a virtualization model that requires the guest OS kernel to be modified so that it can obtain privileged access to the host hardware more directly. In contrast to full virtualization, discussed at the beginning of this article, para-virtualization does not simulate the entire hardware. Instead, it relies on a software interface implemented in the guest kernel, which requests privileged hardware access from the hypervisor through special calls known as hypercalls. The advantage is that there are fewer environment switches and interactions between the guest and the host hypervisor, and thus better efficiency. However, portability is an obvious issue, since a para-virtualized guest is typically tied to the interface of one particular hypervisor. Note also that some operating systems, such as Windows, do not support para-virtualization. [3]

x86 models of virtualization

Trap and emulate model

The trap-and-emulate model is based on the following idea. When a guest virtual machine attempts to execute a privileged instruction, it triggers a trap or fault that goes down to level L0, where the host hypervisor resides. Only the host hypervisor is allowed to execute privileged instructions in Ring 0, so it handles the trap caused by the guest and emulates the desired instruction on the guest's behalf. In this way, the guest obtains the effect of Ring 0 privilege through the hypervisor without actually running in Ring 0. Importantly, the guest is unaware of this emulation and operates as if it were running on the bare hardware.
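
The control flow of trap-and-emulate can be made concrete with a minimal Python sketch. It is a toy model under stated assumptions rather than real hypervisor code. The instruction names in `PRIVILEGED`, the `VirtualCPU` class and the `hypervisor_emulate` handler are all hypothetical. Privileged instructions never execute directly. Instead they are diverted to the handler, which updates the guest's virtual state rather than the real hardware.

```python
from dataclasses import dataclass, field

# Hypothetical set of instructions that trap when executed by a guest.
PRIVILEGED = {"cli", "sti", "out"}


@dataclass
class VirtualCPU:
    """Per-guest state that the hypervisor maintains on the guest's behalf."""
    interrupts_enabled: bool = True
    io_log: list = field(default_factory=list)


def hypervisor_emulate(vcpu: VirtualCPU, instr: str, arg=None) -> None:
    """L0's trap handler: apply the instruction's effect to the virtual CPU only."""
    if instr == "cli":
        vcpu.interrupts_enabled = False
    elif instr == "sti":
        vcpu.interrupts_enabled = True
    elif instr == "out":
        vcpu.io_log.append(arg)  # emulated device write


def run_guest(program, vcpu: VirtualCPU) -> int:
    """Execute (instruction, argument) pairs; return how many traps reached L0."""
    traps = 0
    for instr, arg in program:
        if instr in PRIVILEGED:
            traps += 1  # the hardware traps to the hypervisor instead of executing it
            hypervisor_emulate(vcpu, instr, arg)
        # Unprivileged instructions would run directly on the CPU; nothing to model.
    return traps


vcpu = VirtualCPU()
traps = run_guest([("add", None), ("cli", None), ("out", 0x42), ("sti", None)], vcpu)
# traps == 3, vcpu.io_log == [0x42], vcpu.interrupts_enabled is True
```

The guest gets the effect it asked for (interrupts toggled, a value written to a device) without ever running in Ring 0, which is the illusion described above.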

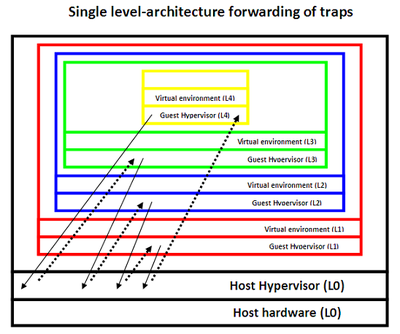

Single-level architecture

x86-based systems provide only single-level architectural support for virtualization. In this hardware model, the host hypervisor L0 (running in Ring 0) handles all traps caused by any guest at any level of the virtualization stack. Assume that the host hypervisor (L0) runs L1. When L1 attempts to run its own virtual machine, L2, this causes a trap that goes down to the host hypervisor at level L0. L0 then handles the trap and performs the emulation that L1 needs in order to create L2. More generally, every trap occurring at level Ln causes a drop to level L0, where the host hypervisor resides. The host hypervisor then forwards the trap to the parent of Ln, which is Ln-1. Ln-1's handling of the trap in turn causes another drop to L0, and so on. These switches continue until the emulated result reaches Ln, allowing it to continue executing (Figure 2).
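
The forwarding described above can be traced with a small Python sketch. It follows this essay's simplified description. It assumes that each intermediate hypervisor needs exactly one privileged operation (for example, resuming its guest), and that this operation traps back to L0 before the trap is passed further. The function name `trace_trap` is hypothetical.

```python
def trace_trap(n: int) -> list:
    """Control transfers for one trap raised by a guest at level n (n >= 1).

    Simplified model of single-level support: only L0 receives traps, so every
    step up to a guest hypervisor is followed by a trap back down to L0.
    """
    if n < 1:
        raise ValueError("n must be >= 1")
    steps = [f"L{n} -> L0"]  # the original trap always lands in L0
    for level in range(n - 1, 0, -1):
        steps.append(f"L0 -> L{level}")  # L0 forwards the trap to the next hypervisor
        steps.append(f"L{level} -> L0")  # that hypervisor's privileged handling traps back
    steps.append(f"L0 -> L{n}")  # the emulated result finally reaches Ln
    return steps


for step in trace_trap(3):
    print(step)
```

For n = 3 this prints six transitions: L3 -> L0, L0 -> L2, L2 -> L0, L0 -> L1, L1 -> L0 and L0 -> L3. Three of these are exits to L0, so even in this optimistic model the number of exits grows with depth.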

The uses of nested virtualization

Compatibility

A user can run, as a virtual machine, an application or OS that is not compatible with the currently running OS. Operating systems can also provide the user with a compatibility mode for other operating systems or applications. An example is Windows XP Mode, available in Windows 7, in which Windows 7 runs Windows XP as a virtual machine.

Cloud computing

A cloud provider, more formally referred to as an Infrastructure-as-a-Service (IaaS) provider, could use nested virtualization to let customers host their own preferred, user-controlled hypervisors and run their virtual machines on the provider's hardware. Both sides benefit: the provider can attract customers, and customers have the freedom to implement their systems on the host hardware without worrying about compatibility issues. [9]

The best-known example of an IaaS provider is Amazon Web Services (AWS). AWS offers a virtualized platform on which other services and web sites can host their APIs and databases on Amazon's hardware.

Security

We can also use nested virtualization for security purposes. One common example is the virtual honeypot. A honeypot is a decoy program or network that appears to be functioning normally to outside users, but in reality exists only as a security tool to observe or trap attackers. Using nested virtualization, we can create a honeypot of our system as a virtual machine and observe how the virtual system is attacked and which features are exploited. We can take advantage of the fact that such virtual honeypots can easily be controlled, manipulated, destroyed or restored. [15]

Migration/Transfer of VMs

Nested virtualization can also be used for live migration or transfer of virtual machines in cases of upgrade or disaster recovery. Consider a scenario where a number of virtual machines must be moved to a new hardware server during an upgrade. Instead of moving each VM separately, we can nest those virtual machines and their hypervisor to create one nested entity that is easier to deal with and more manageable. In the last couple of years, virtualization packages such as VMware and VirtualBox have adopted this notion of live migration and developed their own embedded migration/transfer agents. [8]

Testing

Virtual machines are convenient for testing, evaluation and benchmarking. A virtual machine is essentially a file on the host operating system, so it can easily be removed or recreated if it is corrupted or damaged. A snapshot of a running virtual machine can also be taken and later restored.

Research problem

Nested virtualization has been studied since the mid 1970s [4]. Early research in the area assumed hardware support for nested virtualization. Actual implementations, such as the z/VM hypervisor in the early 1990s, also required architectural support. Other solutions assume that the hypervisors and operating systems being virtualized have been modified to be compatible with nested virtualization. Software-based solutions have recently become available [5], but they suffer from significant performance problems.

The main barrier to nested virtualization without architectural support is the number of control switches between the different levels of hypervisors, which increases as more levels of virtualization are added. A trap in a deeply nested virtual machine first goes to the bottom-level hypervisor. That hypervisor can send it up to the second-level hypervisor, which can in turn send it up (or back down). In the worst case, the trap ends up at the hypervisor one level below the virtual machine itself. A single trap can thus be bounced between different levels of hypervisor, so that one trapping instruction multiplies into many traps.
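
A recursive count of exits gives a rough sense of why this bouncing is so costly. The cost model below is an assumption, not one taken from the paper:

- A trap at level 1 costs one exit.
- A trap at level n > 1 costs one exit, plus the exits caused by the k privileged instructions that L(n-1)'s handler executes. Each of those instructions is itself a trap at level n-1.

The function name `l0_exits` and the parameter `k` are illustrative.

```python
def l0_exits(depth: int, k: int) -> int:
    """Exits reaching L0 for one trap raised by a guest at level `depth`.

    Assumed model: E(1) = 1 and E(n) = 1 + k * E(n - 1), where k is the number
    of trapping privileged instructions in each intermediate hypervisor's handler.
    """
    if depth < 1:
        raise ValueError("depth must be >= 1")
    if depth == 1:
        return 1  # L0 handles an L1 trap directly
    return 1 + k * l0_exits(depth - 1, k)


for depth in range(1, 5):
    print(depth, l0_exits(depth, k=5))
```

With k = 5 this gives 1, 6, 31 and 156 exits for depths 1 to 4. In general E(n) = 1 + k + ... + k^(n-1), so under this model the cost grows geometrically with depth whenever k > 1.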

Generally, solutions that require architectural support and specialized software for the guest machines are not practically useful because this support does not always exist, such as on x86 processors. Solutions that do not require this suffer from significant performance costs because of how the number of traps expands as nesting depth increases. This paper presents a technique to reconcile the lack of hardware support on available hardware with efficiency. It is, for the most part, able to contain the problem of a single nested trap expanding into many more trap instructions, at least for the nesting depth the authors considered, which allows efficient virtualization without architectural support.

More specifically, virtualization deals with how to share the resources of the computer between multiple guest operating systems. Nested virtualization must share these resources between multiple guest operating systems and guest hypervisors. The authors acknowledge the CPU, memory, and IO devices as the three key resources that they need to share. Combining this, the paper presents a solution to the problem of how to multiplex the CPU, memory, and IO efficiently between multiple virtual operating systems and hypervisors on a system which has no architectural support for nested virtualization.

Contribution

The continuing evolution of computers encourages increasingly intricate designs that are virtualized and suited to cloud computing. The paper supports this trend by allowing consumers and users to run machines with their own choice of hypervisor/OS combination, which provides grounds for security and compatibility. The abstractions presented in the paper, such as shadow paging and the isolation of a single OS's resources, give programmers a base for further development and new ideas built on this infrastructure. For example, the paper Accountable Virtual Machines runs programs inside a VM whose execution is recorded, and such a VM could readily be placed on a separate nested hypervisor for better isolation.

Theory

The fundamental idea of the Turtles Project is to multiplex the hardware among all the guest virtual machines involved. When a virtual machine such as L1 attempts to run L2, this triggers a trap that is handled by L0, just as illustrated earlier in the single-level architecture model. This trap includes the environment specifications that L1 prepared for running L2. L0 combines these specifications with its own specifications for L1, so that L2 can run directly on the bare hardware as if it were a guest of L0. The approach thus flattens the virtualization levels, running every nested guest on L0 in the same way as L1.

However, the following question should be asked: if the host hypervisor ends up running all the guest virtual machines and multiplexing the hardware among them, how can the virtualization levels be kept track of? The answer lies within the host hypervisor. It uses special control structures, the VMCS on Intel VMX and the VMCB on AMD SVM. With these, the hypervisor can differentiate between the levels and keep track of the parent and guest at each level.

CPU Virtualization

L0 (the lowest hypervisor) runs L1 using VMCS0->1 (a virtual machine control structure). The VMCS is the fundamental data structure that a hypervisor prepares to describe a virtual machine; it is passed to the CPU, which runs the virtual machine accordingly. L1, which is also a hypervisor, prepares VMCS1->2 for its own virtual machine and executes vmlaunch to start it. vmlaunch traps, and L0 must handle the trap, because L1 is running as a virtual machine while L0 occupies the hardware's only hypervisor mode. For the same reason, L2 must actually run as a guest of L0 in order to multiplex the hardware, even though it is logically a virtual machine of L1. L0 therefore merges VMCS0->1 with VMCS1->2 into VMCS0->2, which enables L0 to run L2 directly. L0 then launches L2, which may later trap. L0 either handles the trap itself or forwards it to L1, depending on whether handling it is L1's responsibility.

To handle a single L2 exit, L1 needs to read and write the VMCS, disable interrupts and perform other privileged operations. This would normally not be a problem. However, L1 is itself running in guest mode, so each of these operations traps to L0. A single high-level L2 or L3 exit therefore causes many exits, and more exits mean lower performance. The authors address this by making each single exit faster and by reducing the frequency of exits, for example with multi-dimensional paging. Finally, whichever of L1 or L0 is responsible for the trap finishes handling it and resumes L2. This process repeats continuously.
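
The merge and the forwarding decision can be sketched in Python. This is a deliberately simplified model: a real VMCS has many more areas and fields. The `VMCS` class, its three attributes and the exit-reason strings below are hypothetical stand-ins. The idea is that VMCS0->2 combines three things:

- L2's guest state, taken from VMCS1->2.
- The host state from VMCS0->1, so that exits return to L0 rather than L1.
- Any event that either hypervisor asked to intercept, which causes a trap.

```python
from dataclasses import dataclass


@dataclass
class VMCS:
    """Hypothetical, heavily simplified virtual machine control structure."""
    guest_state: dict  # register state of the VM being run
    host_state: dict   # where the CPU goes on an exit (the hypervisor)
    intercepts: set    # events that should cause an exit


def merge_vmcs(vmcs01: VMCS, vmcs12: VMCS) -> VMCS:
    """Build VMCS0->2 so that L0 can run L2 directly on the hardware."""
    return VMCS(
        guest_state=dict(vmcs12.guest_state),  # L2's state, as specified by L1
        host_state=dict(vmcs01.host_state),    # exits must land in L0
        intercepts=vmcs01.intercepts | vmcs12.intercepts,  # union of both wishes
    )


def route_exit(reason: str, vmcs12: VMCS) -> str:
    """L0 forwards an L2 exit to L1 only if L1 asked to intercept it."""
    return "L1" if reason in vmcs12.intercepts else "L0"


vmcs01 = VMCS({"rip": 0x1000}, {"rip": "L0 exit handler"}, {"ept_violation"})
vmcs12 = VMCS({"rip": 0x2000}, {"rip": "L1 exit handler"}, {"cpuid", "hlt"})
vmcs02 = merge_vmcs(vmcs01, vmcs12)
print(route_exit("cpuid", vmcs12), route_exit("ept_violation", vmcs12))  # L1 L0
```

The host state always points at L0, so every L2 exit lands in L0 first. That is why L0 must then decide whether to handle the exit itself or reflect it to L1.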

Memory virtualization

With two levels of nesting (n = 2), there are three logical address translations: L2 virtual to L2 physical, L2 physical to L1 physical, and L1 physical to L0 physical. However, the hardware MMU with EPT (Extended Page Tables) supports only two levels of translation. The first is guest virtual to guest physical, through the guest's own page table. The second is guest physical to host physical, through the EPT table. The three translations must therefore be compressed onto the two hardware tables, going from beginning to end in two steps instead of three.

One way to do this is with shadow page tables, for example shadow-on-EPT. Here L1 maintains shadow page tables for L2 while L0 uses EPT for L1, which compresses the three logical translations onto the two hardware tables. The authors' preferred technique, multi-dimensional paging, instead compresses the EPT tables. EPT tables rarely change, whereas guest page tables change frequently. L0 therefore emulates EPT for L1 and uses EPT0->1 and EPT1->2 to construct EPT0->2. This results in fewer exits.
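
At its core, combining EPT0->1 and EPT1->2 into EPT0->2 is function composition. The Python sketch below models each table as a dictionary from page number to page number. The names `compose` and `translate`, the 4 KiB page size and the example mappings are assumptions made for illustration. A real L0 would presumably build and update this table incrementally, for example when L2 touches a page that is not yet mapped, rather than all at once as here.

```python
PAGE_SIZE = 4096  # assumed 4 KiB pages


def compose(ept12: dict, ept01: dict) -> dict:
    """EPT0->2[p] = EPT0->1[EPT1->2[p]] for every L2-physical page p.

    ept12 maps L2-physical pages to L1-physical pages (maintained by L1);
    ept01 maps L1-physical pages to L0-physical pages (maintained by L0).
    Pages whose L1-physical page is not backed yet are omitted; in a real
    system an access to them would fault and L0 would fill the entry in.
    """
    return {p: ept01[q] for p, q in ept12.items() if q in ept01}


def translate(table: dict, addr: int) -> int:
    """Translate a byte address through a page-granular table."""
    page, offset = divmod(addr, PAGE_SIZE)
    return table[page] * PAGE_SIZE + offset


ept12 = {0: 5, 1: 7, 2: 9}  # L2-physical page -> L1-physical page
ept01 = {5: 100, 7: 101}    # L1-physical page -> L0-physical page
ept02 = compose(ept12, ept01)
print(ept02)  # {0: 100, 1: 101}
```

One lookup in EPT0->2 gives the same answer as walking EPT1->2 and then EPT0->1. This lets the hardware's two-step walk (L2's own page table, then EPT0->2) cover all three logical translations.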

I/O virtualization

There are three fundamental ways for a virtual machine to access I/O:

- Device emulation [10].
- Para-virtualized drivers, where the guest knows it is using a virtual device driver [11][12].
- Direct device assignment [13][14], which gives the best performance.

To get the best performance, the authors used an IOMMU to make DMA that bypasses the hypervisor safe. With nesting, there are 3x3 combinations of I/O virtualization methods. The authors chose multi-level device assignment, which gives the L2 guest direct access to L0's devices, bypassing both L0 and L1. To do this, they had to handle memory-mapped I/O, programmed I/O, DMA and interrupts.

For DMA, each hypervisor (L0 and L1) needs an IOMMU so that its virtual machine can access the device directly while remaining safe. Since there is only one hardware IOMMU, L0 needs to emulate an IOMMU for L1. L0 then compresses the multiple IOMMU mappings into a single hardware IOMMU page table. L2 can then program the device directly, and the device's DMA transfers go straight into L2's memory space.
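
The compression step described above can be sketched for DMA in Python. This is a simplified model rather than the authors' code, and the `IOMMU` class, `flatten_iommus` and the page numbers are hypothetical. L1 programs an emulated IOMMU mapping from L2-physical to L1-physical pages. L0 owns the real IOMMU, which maps L1-physical to L0-physical pages. L0 folds the two mappings into the single table that the hardware IOMMU uses.

```python
PAGE_SIZE = 4096  # assumed 4 KiB pages


class IOMMU:
    """Hypothetical single hardware IOMMU: device-visible page -> memory page."""

    def __init__(self, mapping: dict):
        self.mapping = dict(mapping)

    def dma_write(self, memory: dict, addr: int, value: int) -> None:
        """Perform a device DMA write, blocking addresses outside the mapping."""
        page, offset = divmod(addr, PAGE_SIZE)
        if page not in self.mapping:
            raise PermissionError(f"DMA to unmapped page {page} blocked")
        memory[self.mapping[page] * PAGE_SIZE + offset] = value


def flatten_iommus(l1_map_for_l2: dict, l0_map_for_l1: dict) -> IOMMU:
    """Combine L1's emulated IOMMU table with L0's into one hardware table."""
    return IOMMU({p: l0_map_for_l1[q]
                  for p, q in l1_map_for_l2.items() if q in l0_map_for_l1})


host_memory = {}                         # sparse model of L0 physical memory
iommu = flatten_iommus({0: 3}, {3: 42})  # L2 page 0 -> L1 page 3 -> L0 page 42
iommu.dma_write(host_memory, 16, 0xAB)   # the device writes into L2's buffer
print(host_memory)  # {172048: 171}
```

Neither L0 nor L1 runs on the DMA path: the device writes straight into L2's memory. The IOMMU still rejects addresses that L2 was not given.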

Micro-optimizations

A guest of a nested hypervisor is slower than the same guest on a bare-metal hypervisor in two main places: the transitions between L1 and L2, and the exit-handling code running in the L1 hypervisor. Since L1 and L2 are assumed to be unmodified, the required changes were made in L0 only.

First, the authors optimized the transitions between L1 and L2, each of which involves an exit to L0 followed by an entry. In L0, most of the time is spent merging VMCSs. They optimize this by copying data between VMCSs only when it has been modified, carefully balancing full copying against partial copying and tracking of changes. VMCS merging is optimized further by copying multiple VMCS fields at once. Normally, by Intel's specification, reads and writes must be performed with the vmread and vmwrite instructions, each of which operates on a single field. The authors found that they could bypass vmread and vmwrite without ill side effects, copying multiple fields at once with large memory copies. However, this may not work on processors other than the ones used in testing.

Second, the main cause of the slowdown in exit handling is the additional exits caused by privileged instructions in the exit-handling code. L1 uses vmread and vmwrite to read and change the guest and host specifications, which causes L1 to exit multiple times while it handles a single L2 exit. On AMD SVM, by contrast, the guest and host specifications can be read or written directly with ordinary memory loads and stores. L0 therefore does not intervene while L1 modifies L2's specifications.
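
Both optimizations can be illustrated with a rough Python model. This is an assumed cost model, not a set of measurements from the paper, and all names and numbers are illustrative. `sync_dirty_fields` stands in for copying only the VMCS fields that L1 changed. `exits_per_l2_exit` counts the exits L0 sees while L1 handles one L2 exit, both with and without trapping field accesses.

```python
def sync_dirty_fields(vmcs12: dict, vmcs02: dict, dirty: set) -> int:
    """Copy into VMCS0->2 only the VMCS1->2 fields L1 modified; return the count."""
    for name in dirty:
        vmcs02[name] = vmcs12[name]
    copied = len(dirty)
    dirty.clear()  # fields are clean again until L1 writes them
    return copied


def exits_per_l2_exit(reads: int, writes: int, accesses_trap: bool) -> int:
    """Exits seen by L0 while L1 handles a single L2 exit (simplified).

    Counts the L2 exit itself and L1's trapping resume instruction, plus one
    exit per VMCS field access when each vmread/vmwrite traps (plain VMX).
    Other privileged instructions in L1's handler are ignored.
    """
    accesses = reads + writes if accesses_trap else 0
    return 2 + accesses


vmcs12 = {"rip": 0x2000, "rsp": 0x8000, "cr3": 0x3000}
vmcs02 = {"rip": 0x1FFF, "rsp": 0x8000, "cr3": 0x3000}
print(sync_dirty_fields(vmcs12, vmcs02, {"rip"}))     # 1
print(exits_per_l2_exit(10, 5, accesses_trap=True))   # 17
print(exits_per_l2_exit(10, 5, accesses_trap=False))  # 2
```

The second exit count corresponds to the AMD SVM situation described above, where L1 edits L2's specification with ordinary loads and stores.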

Critique

The Pros

The paper unequivocally demonstrates a strong contribution to the area of virtualization and data sharing within a single machine. It is aimed at programmers, and should not have much effect on an end user running an application in a nested virtual machine, especially if the user works at a shallow nesting depth. In section 1, the authors mention the most common use cases for nested virtualization. These include virtualizing operating systems that already contain hypervisors (like Windows 7) and running hypervisors in the cloud, and one can argue that they will be at a shallow depth. It follows that the tests the authors perform in section 4 cover the most common use cases, so users can expect similarly impressive performance.

The contribution is also visible with respect to security and compatibility. On the security side, this nested virtualization technique can be used to study hypervisor-level rootkits, such as Blue Pill [6], by hosting an infected hypervisor as a guest on top of another hypervisor. This is the first successful implementation of its type that does not modify the hardware, although there have been reasonable earlier research designs. We therefore expect to see increased interest in the nested integration model described above. The framework also makes testing and debugging convenient, because a hypervisor can function inconspicuously without being detected by other nested hypervisors and VMs. Moreover, the efficiency overhead is reduced to 6-10% per level thanks to optimizations such as omitted vmwrites and multi-dimensional paging, which is very appealing.

The Cons

The main drawback is efficiency, which suffers because the authors introduce an additional level of abstraction. The long-standing trade-off between memory and efficiency continues as nested virtualization enters our lives. The performance hit is mainly caused by exits that can multiply exponentially. When a nested guest traps, control is handed to the lowest-level hypervisor, which may hand the trap off to the hypervisors above it before finally returning to the guest. Time complexity might therefore become an issue when many levels of virtualization are involved, and the paper does not demonstrate that the design can handle much deeper levels of nesting.

Furthermore, we observed that the paper performs tests only at the L2 level, that is, a guest with two hypervisors below it. To understand the limits of nesting, it would have been useful if the authors had investigated deeper levels such as L4 or L5. It is difficult to predict how the system will react to many levels of nesting, because the number of traps and other performance-killing effects can potentially grow exponentially as nesting gets deeper.

Another significant drawback is that some of the paper's optimizations, such as avoiding vmread/vmwrite operations, are aimed at specific CPUs. As stated on page 7, section 3.5: "(...) this optimization does not strictly adhere to the VMX specifications, and thus might not work on processors other than the ones we have tested". This means that some of the techniques the authors use to increase performance may not be reproducible on other systems, so the generality of parts of their solution may be limited.

The Style and Presentation

The paper presents an elaborate and very specific description of nested virtualization, and it does an excellent job of conveying the technical details. However, it seems to assume a high level of background knowledge and familiarity with the subject, especially with the more technical aspects of the hardware architecture used to implement virtualization. For example, section 4.1.2, "Impact of Multidimensional paging", illustrates the technique with an example using terms such as EPT and L1. These terms may be unfamiliar to readers not used to the technical language. The paper does, however, touch on a wide range of topics in the field of virtualization, including CPU, memory and I/O device virtualization. Because of this wide scope, many of the major components of virtualization are discussed, and in the process of understanding the paper one learns a lot about many different parts of the field.

Conclusion

The research presented in the paper is the first to achieve efficient x86 nested virtualization without altering the hardware, relying only on software techniques and mechanisms. This is a major improvement over currently available solutions, and the techniques used to achieve nested virtualization are comprehensive and interesting. The work also has good potential as a basis for future research. The authors refer to security and cloud computing as two potential areas for future research. Another interesting area could be applying the authors' approaches to other problems that involve nesting, namely compressing multiple levels of abstraction into one with multi-dimensional paging and multi-level device assignment. The paper also won the best paper award at the conference, further reflecting its quality.

References

[1] Tanenbaum, Andrew (2007). Modern Operating Systems (3rd edition), page 569.

[2] Popek & Goldberg (1974). Formal requirements for virtualizable third generation architectures, section 1: Virtual machine concepts.

[3] Tanenbaum, Andrew (2007). Modern Operating Systems (3rd edition), pages 574-576.

[4] Goldberg, R. P. Architecture of Virtual Machines. In Proceedings of the Workshop on Virtual Computer Systems, ACM, pages 74-112.

[5] Berghmans, O. (2010). Nesting Virtual Machines in Virtualization Test Frameworks. Master's thesis, University of Antwerp.

[6] Rutkowska, Joanna (2006). Presentation at Black Hat Briefings 2006.

[7] Buytaert, Dittner & Rule. The Best Damn Server Virtualization Book Period, pages 16-18.

[8] Clark, Fraser, Hand, Hansen, Jul, Limpach, Pratt & Warfield. Live migration of virtual machines, pages 273-286.

[9] Gillam, Lee (2010). Cloud Computing: Principles, Systems and Applications, pages 26-27.

[10] Sugerman, Venkitachalam & Lim (2001). Virtualizing I/O devices on VMware Workstation’s hosted virtual machine monitor.

[11] Russell, Rusty (2008). virtio: towards a de-facto standard for virtual I/O devices.

[12] Barham, Dragovic, Fraser, Hand, Harris, Ho, Neugebauer, Pratt & Warfield (2003). Xen and the art of virtualization.

[13] LeVasseur, Uhlig, Stoess & Götz (2004). Unmodified device driver reuse and improved system dependability via virtual machines.

[14] Yassour, Ben-Yehuda & Wasserman (2008). Direct device assignment for untrusted fully-virtualized virtual machines.

[15] Proceedings of the 5th European Conference on Information Warfare and Security, National Defence College, Helsinki, Finland, 1-2 June 2006, pages 245-246.